What I learned through this project:

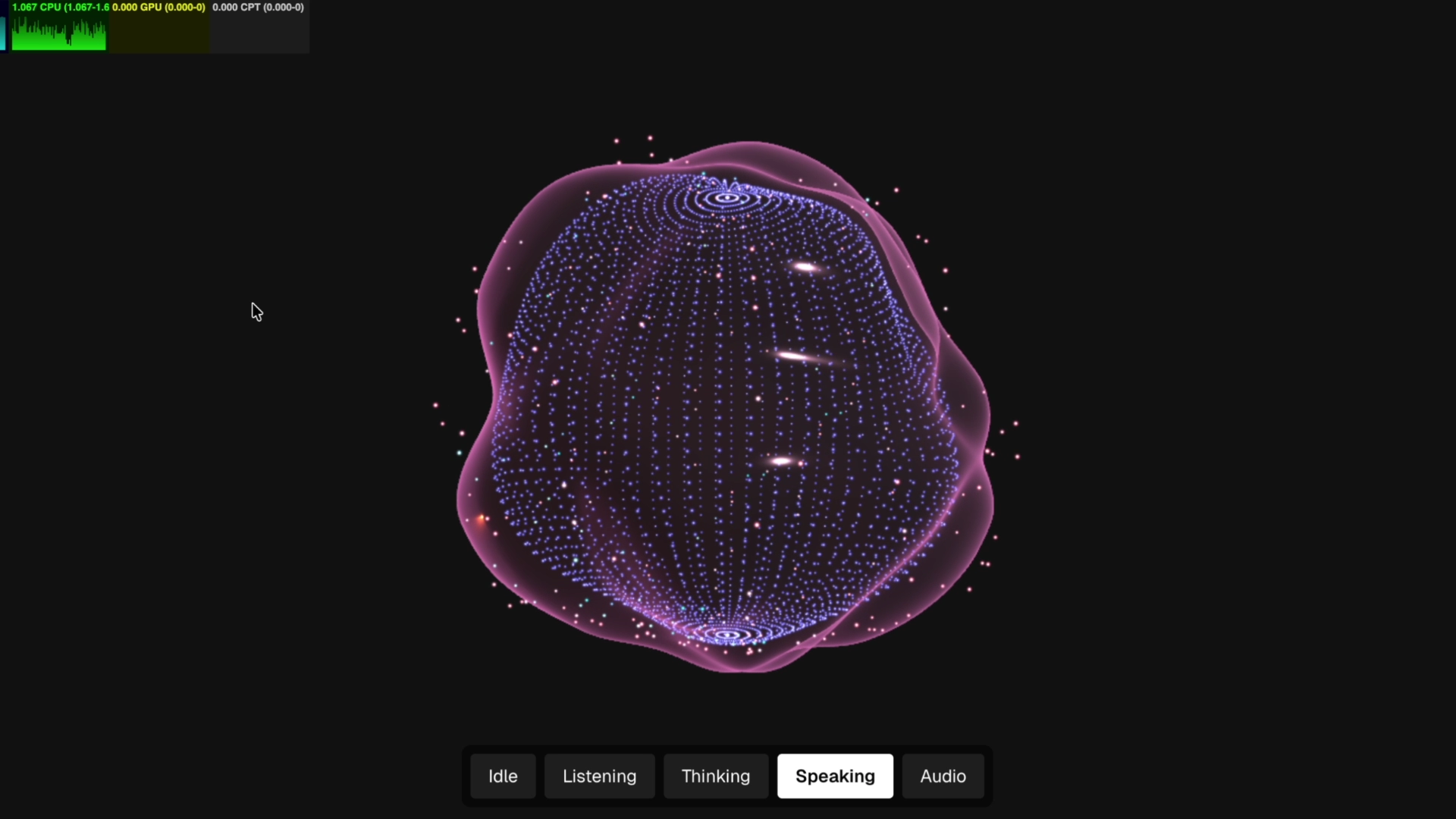

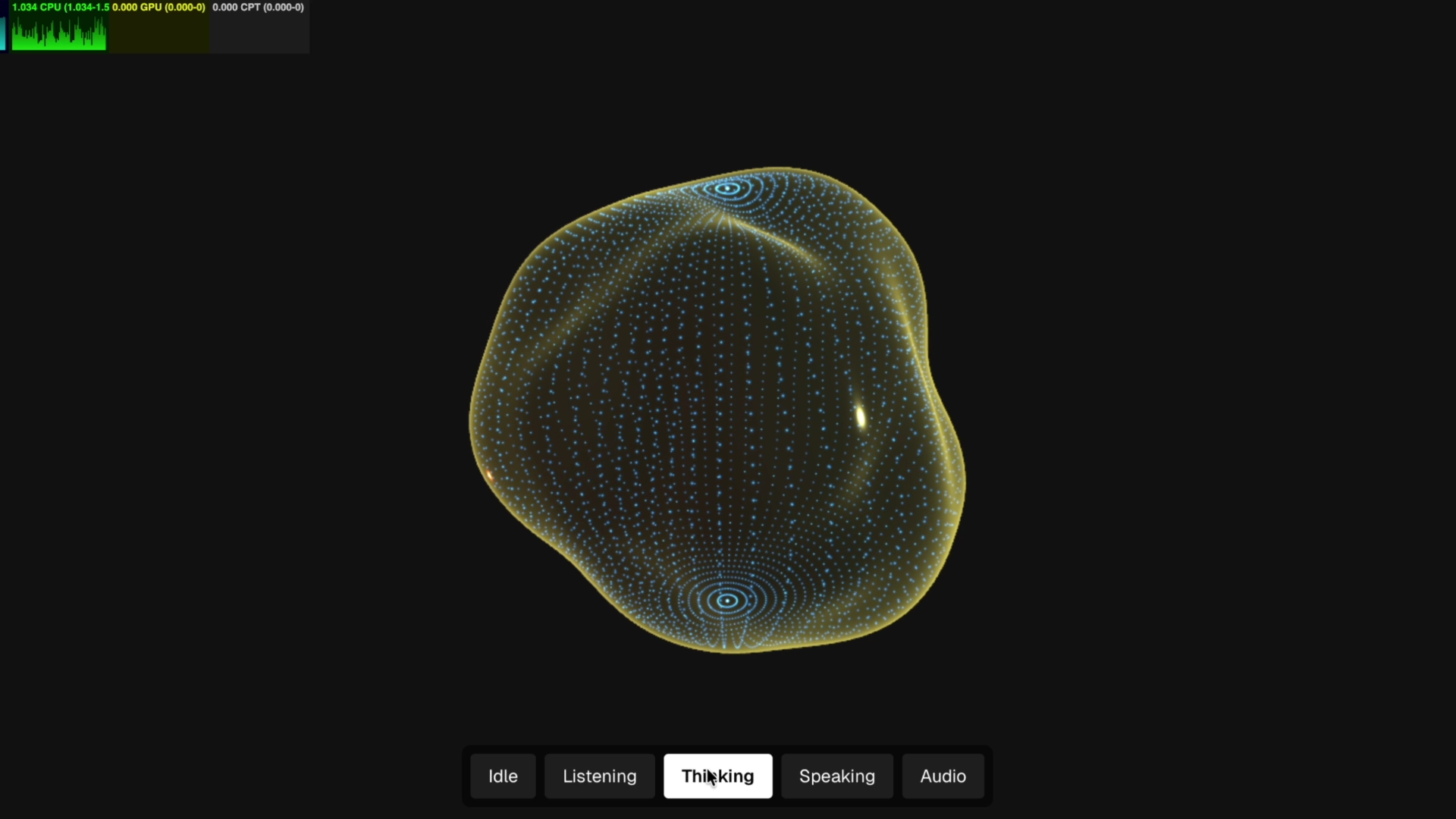

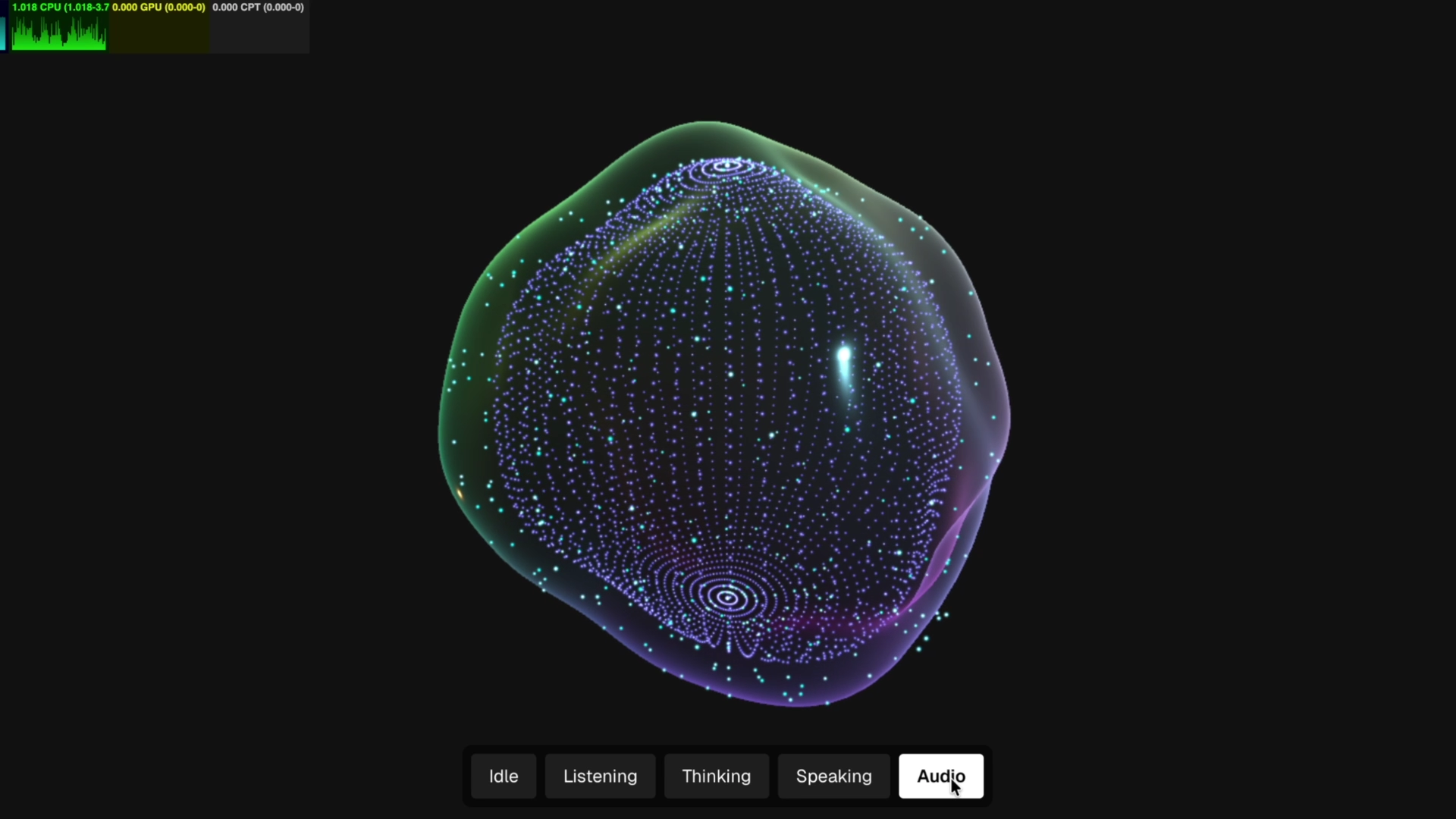

Uniform-driven state machine

I implemented a uniform-driven state machine that controls four visual modes: Speaking, Thinking, Audio Listening, and Idle. Each state modifies shader uniforms, deformation intensity, glow behavior, and particle motion patterns in real time.
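A minimal sketch of how such a state machine could be structured, assuming each state maps to a set of target uniform values that the renderer eases toward every frame. The names `VisualStateMachine`, `UniformTargets`, and `STATE_TARGETS`, as well as all numeric values, are hypothetical and chosen for illustration; they are not taken from the project.

```typescript
// Hypothetical names and values throughout; the project's real uniforms are not shown.
type VisualState = "speaking" | "thinking" | "listening" | "idle";

interface UniformTargets {
  deformation: number;   // displacement strength, 0..1
  glow: number;          // glow intensity, 0..1
  particleSpeed: number; // particle motion multiplier
}

// Illustrative target values per state (assumed, not measured from the project).
const STATE_TARGETS: Record<VisualState, UniformTargets> = {
  speaking: { deformation: 0.8, glow: 1.0, particleSpeed: 1.5 },
  thinking: { deformation: 0.4, glow: 0.6, particleSpeed: 0.8 },
  listening: { deformation: 0.6, glow: 0.8, particleSpeed: 1.0 },
  idle: { deformation: 0.1, glow: 0.3, particleSpeed: 0.3 },
};

class VisualStateMachine {
  private state: VisualState = "idle";
  // Current uniform values; these would be copied to GPU uniforms each frame.
  readonly uniforms: UniformTargets = { ...STATE_TARGETS.idle };

  setState(next: VisualState): void {
    this.state = next;
  }

  getState(): VisualState {
    return this.state;
  }

  // Ease every uniform toward the active state's target. Call once per frame.
  update(deltaSeconds: number, rate = 4): void {
    const target = STATE_TARGETS[this.state];
    // Exponential blend factor, so the easing is independent of frame rate.
    const t = 1 - Math.exp(-rate * deltaSeconds);
    for (const key of Object.keys(target) as (keyof UniformTargets)[]) {
      this.uniforms[key] += (target[key] - this.uniforms[key]) * t;
    }
  }
}
```

Easing toward targets instead of assigning them directly is one way to keep transitions between modes smooth; a hard switch would make the glow and deformation jump visibly.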

Procedural displacement logic

Custom shaders procedurally displace the vertex positions of both the particles and the central sphere. Audio frequency data is mapped to GPU uniforms, driving dynamic deformation, pulsation, and distortion effects.
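The mapping from audio data to displacement might look roughly like the sketch below. It assumes the frequency data arrives as a `Uint8Array` of byte magnitudes (the format that the Web Audio `AnalyserNode.getByteFrequencyData` method fills), splits it into three bands at arbitrary points, and uses the bass level to push a vertex along its normal. The `displace` function is a CPU reference of what a vertex shader could compute; all names, band splits, and constants are assumptions, not the project's actual shader code.

```typescript
// Average a range of byte frequency bins (0..255) into a normalized 0..1 level.
function bandLevel(bins: Uint8Array, start: number, end: number): number {
  const hi = Math.min(end, bins.length);
  const lo = Math.max(0, Math.min(start, hi));
  if (hi <= lo) return 0;
  let sum = 0;
  for (let i = lo; i < hi; i++) sum += bins[i];
  return sum / ((hi - lo) * 255);
}

interface AudioUniforms {
  bass: number;
  mid: number;
  treble: number;
}

// Split the spectrum into three bands. The 10% / 50% split points are assumptions.
function audioToUniforms(bins: Uint8Array): AudioUniforms {
  const n = bins.length;
  const a = Math.floor(n * 0.1);
  const b = Math.floor(n * 0.5);
  return {
    bass: bandLevel(bins, 0, a),
    mid: bandLevel(bins, a, b),
    treble: bandLevel(bins, b, n),
  };
}

type Vec3 = [number, number, number];

// CPU reference of a per-vertex displacement: move the vertex along its normal
// by a sine pattern whose amplitude is scaled by the bass level.
function displace(
  position: Vec3,
  normal: Vec3,
  time: number,
  u: AudioUniforms,
  strength = 0.5,
): Vec3 {
  const wave = Math.sin(position[1] * 4 + time * 2) * 0.5 + 0.5; // remapped to 0..1
  const offset = strength * u.bass * wave;
  return [
    position[0] + normal[0] * offset,
    position[1] + normal[1] * offset,
    position[2] + normal[2] * offset,
  ];
}
```

With silent input every band is 0, so `displace` returns the vertex unchanged; the shape only deforms while audio energy is present.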

Dual system: particles + glow sphere

The scene combines a GPU-accelerated particle field and a glowing central sphere. Both systems are synchronized through shared uniform inputs, creating a cohesive audio-reactive visual composition.
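One way to keep two systems in sync is to hand both the same uniform object, so a single write per frame reaches each of them. The sketch below illustrates that idea on the CPU side only; `SharedUniforms`, `ParticleField`, `GlowSphere`, and the formulas inside them are hypothetical stand-ins, not the project's classes.

```typescript
// One shared object that both systems read from (hypothetical field names).
interface SharedUniforms {
  time: number;       // seconds since start
  audioLevel: number; // normalized 0..1
}

class ParticleField {
  private readonly u: SharedUniforms;

  constructor(u: SharedUniforms) {
    this.u = u; // keep a reference, not a copy
  }

  // Particle spread grows with the audio level (illustrative formula).
  spread(): number {
    return 1 + this.u.audioLevel * 0.5;
  }
}

class GlowSphere {
  private readonly u: SharedUniforms;

  constructor(u: SharedUniforms) {
    this.u = u; // same reference as the particle field
  }

  // Glow intensity follows the same audio level (illustrative formula).
  intensity(): number {
    return 0.3 + this.u.audioLevel * 0.7;
  }
}

// A single write to the shared object updates both systems.
const shared: SharedUniforms = { time: 0, audioLevel: 0 };
const particles = new ParticleField(shared);
const sphere = new GlowSphere(shared);
shared.audioLevel = 1;
```

Because both objects hold a reference to `shared`, neither can drift out of step with the other, which is the property the paragraph above describes.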

WebGPU rendering & performance optimization

The rendering pipeline is built on WebGPU with custom TSL (Three.js Shading Language) shaders and is optimized to hold a stable frame rate despite complex deformation, state transitions, and continuous audio input processing.